Getting started with Kubernetes in Docker (Kind)

Introduction to Kind

Primarily designed for testing Kubernetes itself, Kind (Kubernetes in Docker) is a tool for running local Kubernetes clusters using lightweight Docker container “nodes”.

Production-grade Kubernetes clusters typically run on at least two physical or virtual servers (nodes). As Kubernetes proliferated and its tooling improved, Kind became increasingly handy, especially for use cases that assume a cluster environment but are resource-constrained or do not have the resource and availability requirements that justify multiple servers. For example, Kind enables local development and testing of applications that would otherwise need a full-fledged production-grade cluster for deployment.

This article describes what Kind is, how it works under the hood, some common real-world use cases where Kind shines, and finally, how Adaptive leverages functionality provided by Kind to set up bastion hosts and create lightweight environments for teams which do not have an existing Kubernetes environment.

What is Kind?

Kind is a suite of tools designed to rapidly create Kubernetes clusters in a local development environment. It is an ideal option for developers who want to test their Kubernetes applications without having to go through the hassle of provisioning physical or cloud-provided clusters. It replicates the Kubernetes environment, so existing tools that work with Kubernetes work seamlessly with Kind, but without the typical node requirements.

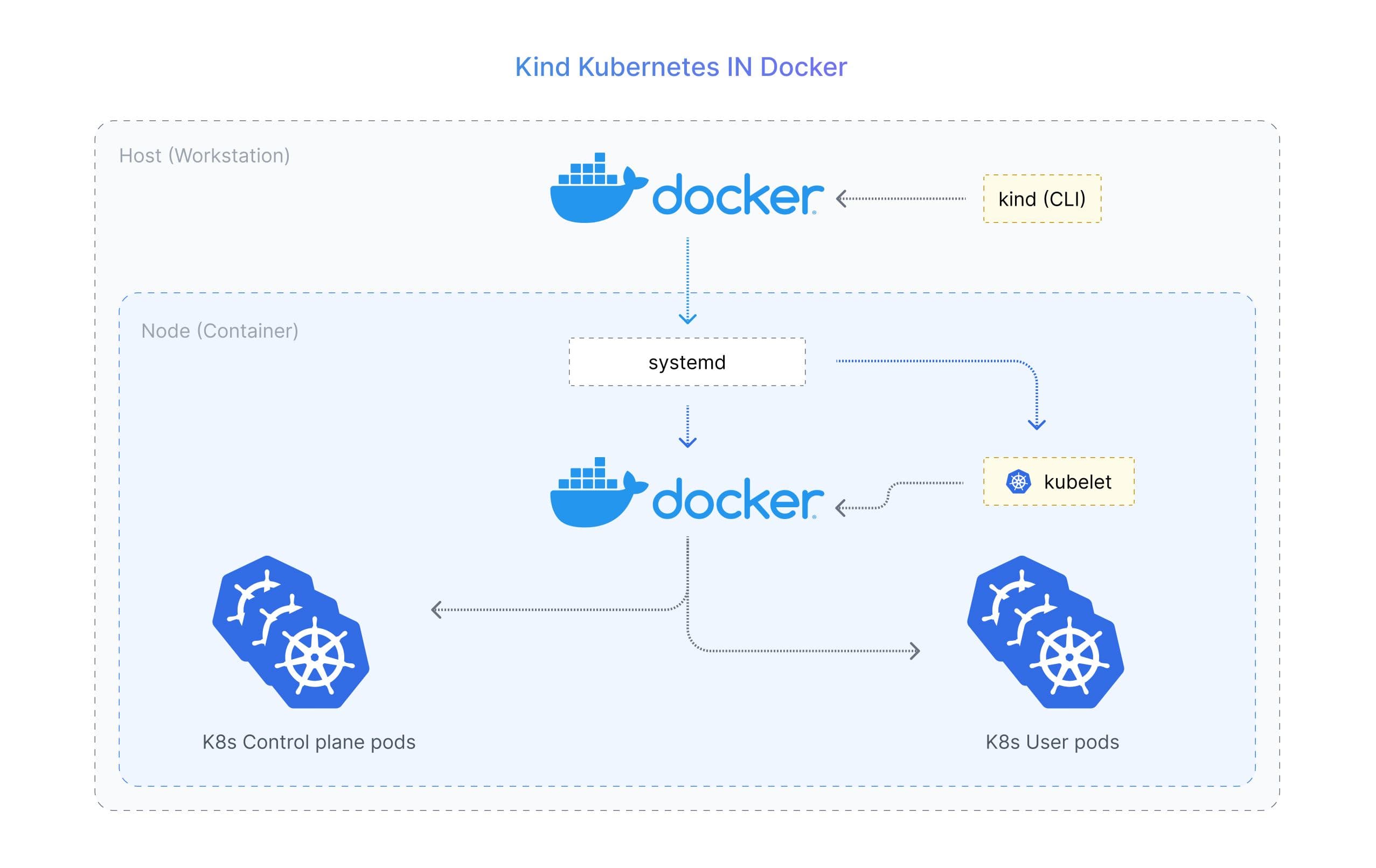

Each “node” in a Kind cluster is a Docker container created from a Docker image that contains the files and binaries required to run nested containers and a functional Kubernetes runtime.

Kind clusters are best suited to local development, testing, and prototyping. They are not suitable for production-level workloads.

Components of Kind

Kind is divided into:

- The command line tool kind for end users

- A node image for the container that acts like a cluster node. To be able to run Kubernetes inside the container, the image includes k8s binaries on top of a minimal base image required to run k8s. The base image is built from a minimal Ubuntu image and adds packages for:

  - Running services (systemd)

  - Kubernetes components

  - Network-backed storage with Kubernetes

  - Container runtime

  - Use by Kind

  - Semi-core Kubernetes functionality

- Go packages that implement most of the functionality

How Kind Works

Kind simplifies the creation of local Kubernetes clusters by abstracting each cluster node as a Docker container and providing the tooling to manage those containers. This abstraction relies on nested containerization, a pattern often called "Docker inside Docker" (DinD), in which containers run within another Docker container. DinD is commonly employed when managing containerized environments, such as building or testing Docker images, which aligns with Kind's goal of setting up local Kubernetes clusters.

To create a Kubernetes cluster using Kind, the following steps are taken:

- Kind checks that Docker is installed and running. Kind does not install Docker itself, so Docker must be set up beforehand.

- It creates a Docker image for the Kubernetes control plane (CP), responsible for managing the cluster.

- Docker images are created for each Kubernetes node, containing the necessary Kubernetes binaries and files. These node images are based on the kindest/node image, which provides Kubernetes, systemd, and other required binaries for deploying Kubernetes within the container. The systemd service forwards journal logs to the container TTY.

- The CP and node containers are then started.

- The Docker containers are interconnected to form the Kubernetes cluster.

Once the Kubernetes cluster is successfully created, Kind provides you with the cluster's name. You can then use the kubectl command to interact with the cluster. Each cluster is identified internally by a Docker object label key, while each node container is identified with cluster-name/ID.
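Once a cluster exists, its node containers can be inspected with ordinary Docker commands. The following is a minimal sketch, assuming the label key kind attaches to node containers is io.x-k8s.kind.cluster (worth verifying against your Kind version). The helper names cluster_filter and list_node_containers are made up for illustration.

```shell
#!/usr/bin/env bash
# Assumed label key that kind sets on every node container.
KIND_CLUSTER_LABEL="io.x-k8s.kind.cluster"

# Build the docker filter expression for a given cluster name.
cluster_filter() {
  printf 'label=%s=%s\n' "$KIND_CLUSTER_LABEL" "$1"
}

# List the node containers of one cluster with their image and status.
list_node_containers() {
  docker ps --filter "$(cluster_filter "$1")" \
    --format 'table {{.Names}}\t{{.Image}}\t{{.Status}}'
}

# Only call docker when it is available on this machine.
if command -v docker >/dev/null 2>&1; then
  list_node_containers kind || echo "docker is installed but not reachable"
fi
```

The kind get nodes --name kind command gives a similar listing without going through Docker directly.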

Setting up Kubernetes Cluster with Kind

Kind is a set of Go packages and Docker images, so you need Go (1.17+) and Docker installed before you can set up Kind.

$ go install sigs.k8s.io/kind@v0.18.0
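A quick way to confirm the prerequisites is to check that each tool is on the PATH. This is only a sketch; check_tool is a hypothetical helper name, and kubectl is included because later steps use it. Note that go install places the kind binary in the bin directory under GOPATH, which needs to be on the PATH for the check to find it.

```shell
#!/usr/bin/env bash
# Report whether a single command is available on PATH.
check_tool() {
  if command -v "$1" >/dev/null 2>&1; then
    echo "found: $1"
  else
    echo "missing: $1"
    return 1
  fi
}

# Check every tool this article relies on, without stopping at the first gap.
for tool in go docker kind kubectl; do
  check_tool "$tool" || true
done

# If kind is installed, print its version to confirm the install worked.
if command -v kind >/dev/null 2>&1; then
  kind version
fi
```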

Once set up, create a cluster using:

$ kind create cluster

Creating cluster "kind" ...

✓ Ensuring node image (kindest/node:v1.26.3) 🖼

✓ Preparing nodes 📦

✓ Writing configuration 📜

✓ Starting control-plane 🕹

✓ Installing CNI 🔌

✓ Installing StorageClass 💾

Set kubectl context to "kind-kind"

You can now use your cluster with:

kubectl cluster-info --context kind-kind

Have a question, bug, or feature request? Let us know! https://kind.sigs.k8s.io/#community 🙂

You are now ready to access the cluster from the same host. To share access to the cluster, you need to share the location of the cluster and the credentials to access it. The setup required can be non-trivial.
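As a starting point for sharing credentials, the cluster's kubeconfig can be written to a standalone file with kind get kubeconfig. The sketch below uses a hypothetical helper, export_kubeconfig, and writes through a temporary file so that a failed export does not leave an empty file behind. The exported file usually points at an API server address on the local machine, so access from another host still requires making that address reachable, which is the non-trivial part.

```shell
#!/usr/bin/env bash
# Write the kubeconfig of a kind cluster to a file.
# Arguments: <cluster-name> <output-path>
export_kubeconfig() {
  local cluster="$1" out="$2" tmp
  tmp="$(mktemp)" || return 1
  if kind get kubeconfig --name "$cluster" > "$tmp"; then
    # The file holds credentials, so keep it readable by the owner only.
    chmod 600 "$tmp" && mv "$tmp" "$out"
  else
    rm -f "$tmp"
    return 1
  fi
}

# Example usage (file name is an arbitrary choice):
#   export_kubeconfig kind ./kind.kubeconfig
#   KUBECONFIG=./kind.kubeconfig kubectl get nodes
```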

Creating Multiple Clusters

You can easily create multiple clusters using the --name parameter with the kind create cluster command:

$ kind create cluster --name kind-test-2

Creating cluster "kind-test-2" ...

✓ Ensuring node image (kindest/node:v1.25.3) 🖼

✓ Preparing nodes 📦

✓ Writing configuration 📜

✓ Starting control-plane 🕹️

✓ Installing CNI 🔌

✓ Installing StorageClass 💾

Set kubectl context to "kind-kind-test-2"

You can now use your cluster with:

kubectl cluster-info --context kind-kind-test-2

Thanks for using kind! 😊

You can also use the kind get clusters command to get a listing of all the clusters you have created:

$ kind get clusters

kind

kind-test-2
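With several clusters present, kubectl needs to be pointed at the right one. As the output above shows, Kind names each kubectl context by prefixing the cluster name with kind-. A small sketch of switching between clusters follows; kind_context and use_cluster are illustrative helper names, not part of Kind.

```shell
#!/usr/bin/env bash
# Derive the kubectl context name for a kind cluster name.
kind_context() {
  printf 'kind-%s\n' "$1"
}

# Make the given kind cluster the current kubectl context.
use_cluster() {
  kubectl config use-context "$(kind_context "$1")"
}

# Example usage:
#   use_cluster kind-test-2
#   kubectl get nodes
```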

Deleting Kind Clusters

To delete a cluster, use the kind delete cluster command with the --name parameter. Note that if the --name parameter is not provided, kind tries to delete the cluster named kind by default.

$ kind delete cluster --name kind-test-2

Deleting cluster "kind-test-2" ...

$ kind delete cluster

Deleting cluster "kind" ...
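To clean up every cluster at once, the two commands above can be combined. This is a sketch rather than a built-in workflow: delete_clusters is a hypothetical helper that reads cluster names from standard input, one per line, and deletes each in turn.

```shell
#!/usr/bin/env bash
# Delete every kind cluster whose name arrives on standard input.
delete_clusters() {
  local name
  while IFS= read -r name; do
    # Skip blank lines so an empty listing deletes nothing.
    [ -n "$name" ] || continue
    kind delete cluster --name "$name"
  done
}

# Example usage:
#   kind get clusters | delete_clusters
```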

How can Kind improve Security Posture?

Kind enables the creation of sandbox environments by setting up local Kubernetes clusters specifically for development and testing purposes. These sandbox environments provide isolation where developers can safely experiment, debug, and test their applications without affecting the production infrastructure.

Security benefits of Kind:

- Limits the attack surface as there are fewer entry points for external attackers

- Provides a controlled testing environment for local deployment

- Makes cluster environments easier to replicate through Kind's YAML configuration files

- Works with Docker-based security tools, since each node is a Docker container

Applications of Kind

Local Development

Kind is typically used for the local development of applications that are deployed on a cluster. For example, developers working on an application that is deployed in production on a 3-node cluster shouldn’t need to provision three servers locally. With the same configuration files that are used for setting up the 3-node cluster, developers can set up a 3-container cluster on their development machines to develop and test in a near-production environment.
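A 3-container cluster of this kind can be described in a Kind configuration file. The sketch below writes such a file with one control-plane node and two workers. The helper name write_three_node_config, the file name, and the cluster name dev-3node are arbitrary choices for illustration.

```shell
#!/usr/bin/env bash
# Write a kind cluster config with one control-plane node and two workers.
# Argument: <output-path>
write_three_node_config() {
  cat > "$1" <<'EOF'
kind: Cluster
apiVersion: kind.x-k8s.io/v1alpha4
nodes:
  - role: control-plane
  - role: worker
  - role: worker
EOF
}

# Example usage:
#   write_three_node_config ./kind-3node.yaml
#   kind create cluster --name dev-3node --config ./kind-3node.yaml
#   kubectl get nodes --context kind-dev-3node
```

Keeping the file in version control lets every developer recreate the same cluster shape with a single command.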

Continuous Integration

As with local development, Kind is used in continuous integration to create clusters on the fly, run automated tests against them, and destroy them when done. This saves both the cost of dedicated nodes and time.
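The create, test, destroy cycle can be sketched as a small wrapper for a CI job. This is an illustration under assumptions, not a prescribed setup: run_in_ephemeral_cluster is a hypothetical helper, the 120-second wait is an arbitrary choice, and the test command is whatever the pipeline normally runs. The cleanup runs from an exit trap so the cluster is deleted even when the tests fail.

```shell
#!/usr/bin/env bash
# Create a throwaway kind cluster, run a command against it, then delete it.
# Arguments: <cluster-name> <command> [args...]
run_in_ephemeral_cluster() {
  local name="$1"
  shift
  (
    # Delete the cluster when this subshell exits, whether tests pass or fail.
    trap 'kind delete cluster --name "$name"' EXIT
    # --wait blocks until the control plane is ready or the timeout passes.
    kind create cluster --name "$name" --wait 120s || exit 1
    "$@"
  )
}

# Example usage (the test script path is a placeholder):
#   run_in_ephemeral_cluster ci-test ./run-integration-tests.sh
```

The wrapper returns the exit status of the test command, so the CI job still fails when the tests do.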

At Adaptive, we leverage Kind for privileged access management.

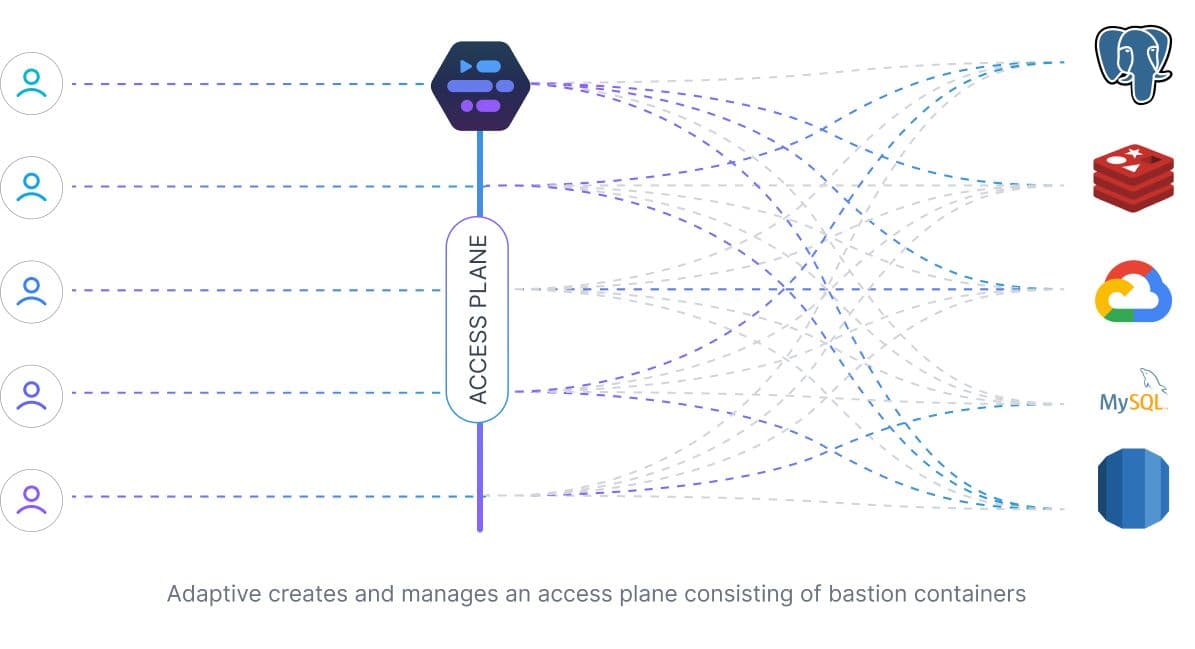

How Adaptive Leverages Kind

Adaptive provides privileged access management to fast-moving teams within organizations. Organizations that have relied on bastion hosts to gate access to their infrastructure can use Adaptive to scale their access management requirements.

Adaptive leverages Kind to set up bastion hosts on the fly for organizations that do not have a K8s setup. Each dynamically created bastion is optimized for accessing a particular infrastructure resource with the necessary pre-installed tooling. With Adaptive, additional access policies such as time to live and user authorization can be configured and enforced on each container.

SOC2 Type II
